Machine learning projects move through complex lifecycles: sourcing data, training models, validating results, and deploying to production. Each phase demands collaboration between researchers, engineers, and business stakeholders. Without strong systems, experiments get lost, deadlines slip, and deployments stall.

That’s why leaders in AI and data science look beyond siloed tools when researching the best project management tools. They need platforms that capture documentation, track experiments, streamline approvals, and keep schedules aligned. Lark delivers this structure, ensuring ML projects stay on track from training to deployment.

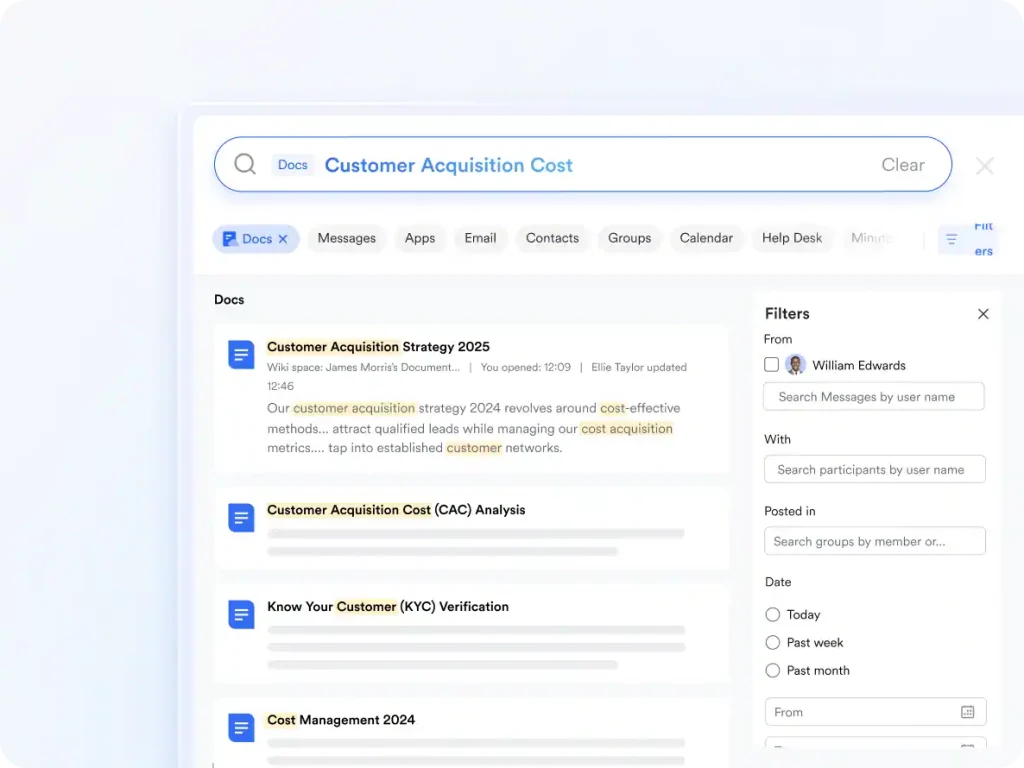

Documenting experiments with Lark Docs

Machine learning requires meticulous record-keeping. Every experiment has parameters, results, and observations that must be documented to replicate outcomes or satisfy compliance. Scattered notes make it hard for teams to learn from prior work. Lark Docs provides one space where experiment logs, technical notes, and compliance records are kept together.

Data scientists can write up experiment results, engineers add deployment details, and compliance officers check the records against regulatory requirements. Version history keeps changes transparent, while permissions protect sensitive data. For instance, when testing a new fraud detection model, analysts log feature engineering choices in Docs, engineers record training parameters, and managers review feasibility. The result is a shared space for document collaboration that helps teams avoid repeating mistakes and iterate faster.

Simplifying deployment approvals with Lark Approval

Lark is an effective choice for teams that need business process management software to handle complex workflows such as deploying machine learning models. Lark Approval streamlines the entire process, from submission to final sign-off, including budget approvals, compliance reviews, and vendor evaluations. Every step is visible, every decision is logged for accountability, and requests move quickly to the right reviewers. For example, a data science team can submit a healthcare model’s validation results for review and approval by compliance officers and leadership. The clear, documented workflow helps the team meet both its deployment deadlines and its regulatory obligations.

Tracking models with Lark Base

Beyond documentation, machine learning projects need a structured way to track progress. Teams often struggle to monitor multiple experiments at once, juggling datasets, model versions, and deployment tasks across fragmented spreadsheets. Lark Base provides a structured system that adapts to your workflow, letting you track everything from dataset sources to model accuracy benchmarks. Automations can remind teams of validation deadlines and update deployment statuses, reducing manual follow-up. This visibility gives leaders confidence in timelines and keeps project details from slipping through the cracks.

Coordinating teams with Lark Messenger

Machine learning projects involve diverse roles: data scientists, engineers, product managers, and compliance staff. Communication bottlenecks often slow progress, especially when model outputs need clarification. Lark Messenger keeps these teams aligned in real time.

Topic groups can be created for each model or project, ensuring updates remain organized. Threaded replies keep discussions tied to specific experiments, while file sharing embeds results directly in context. For example, if a data scientist notices overfitting in a model, they post results in Messenger. Engineers respond with adjustments, and managers confirm timeline impacts. Everyone stays informed, and the project continues without unnecessary delays.

Reviewing models with Lark Meetings

Collaboration is essential in ML projects. Teams meet regularly to review experiment results, validate approaches, and align on deployment readiness. If these reviews aren’t documented, valuable time is wasted repeating discussions. Lark Meetings ensures sessions lead to clear action.

With AI Summary turned on, Lark Meetings can generate notes during the call, and those notes are saved to Docs automatically once the meeting ends. Recordings can also be shared in Messenger for stakeholders who could not attend. For instance, during a model validation review, stakeholders evaluate accuracy, fairness, and production costs. Action items are documented, owners are assigned, and timelines are updated. Weeks later, the record keeps decisions clear, avoiding confusion during deployment.

Managing project timelines with Lark Calendar

Machine learning cycles are deadline-driven. Data preparation, training phases, and deployment windows all follow strict timelines, especially when tied to business initiatives. Missing these deadlines risks delaying product launches or compliance reporting. Lark Calendar keeps every stage visible.

Shared calendars map deadlines for training runs, validation phases, and deployment milestones. Tasks created in Lark sync automatically to Lark Calendar, ensuring preparation steps align with major goals. For example, if a financial institution is deploying a credit risk model, Calendar tracks data submission dates, validation cycles, and regulator review deadlines. This visibility keeps projects aligned with both business needs and compliance requirements.

Conclusion

Machine learning projects succeed when teams keep experiments documented, workflows structured, communication clear, and deployments accountable. With Lark Docs, Base, Messenger, Meetings, Calendar, and Approval, organizations create systems that support innovation without chaos. Models move smoothly from training to production, deadlines are met, and compliance is preserved.

At the same time, long-term ML success depends on how results are delivered to customers and partners. Many organizations support this with Lark as well, connecting predictive insights directly to relationship-building.